The Pick

Year: 2025

Industry: Sports & Entertainment

North Star: Make disciplined betting feel effortless by making intelligence conversational.

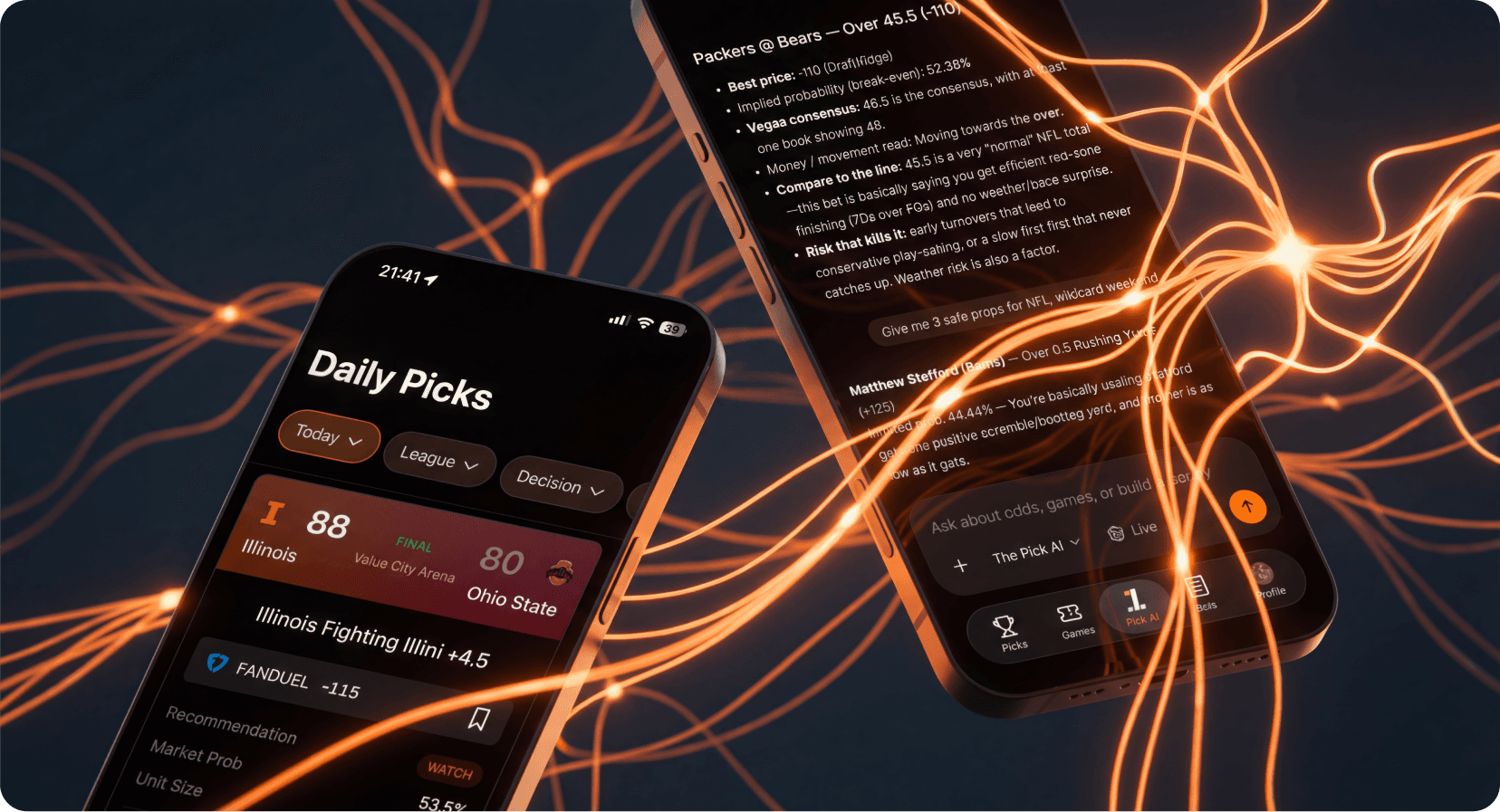

Every betting app on the market profits from chaos. Bright colors, flashing odds, impulse-driven interfaces designed to maximize bets placed rather than bets won. I designed The Pick to work differently, and the difference starts with a bet on conversational AI as the primary interface for complex, time-sensitive decisions.

Sports betting was a natural proving ground for that thesis. The frequency is high, the emotional stakes are real, and the existing tools assume people want to sit with spreadsheets and dashboards. They don't. Bettors talk it through. They text friends, argue in group chats, and make decisions in conversation. The product should feel like that.

Three design principles shaped everything that followed.

Cards over tables. One recommendation per game. The edge, the confidence score, and the reasoning are all visible at a glance. No drilling into data, no research rabbit holes. Thirty seconds and you know today's plays.
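To make "one recommendation per game" concrete, here is a minimal sketch of a card record and the selection rule. The field names, the `PickCard` and `one_card_per_game` names, and the definition of edge (model probability minus the probability implied by the book's odds) are all assumptions for illustration, not The Pick's actual schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PickCard:
    """Hypothetical shape of one card: a single recommendation for one game."""
    game_id: str
    selection: str
    american_odds: int
    model_prob: float  # hypothetical: the model's estimated win probability
    confidence: float  # hypothetical: 0-1 confidence score shown on the card
    reasoning: str     # short explanation rendered on the card

    @property
    def implied_prob(self) -> float:
        """Break-even probability implied by the book's American odds."""
        o = self.american_odds
        return -o / (-o + 100) if o < 0 else 100 / (o + 100)

    @property
    def edge(self) -> float:
        """Assumed definition: model probability minus implied probability."""
        return self.model_prob - self.implied_prob


def one_card_per_game(candidates: list[PickCard]) -> list[PickCard]:
    """Keep only the highest-edge candidate per game, dropping non-positive edges."""
    best: dict[str, PickCard] = {}
    for card in candidates:
        if card.edge <= 0:
            continue  # no edge, no card
        current = best.get(card.game_id)
        if current is None or card.edge > current.edge:
            best[card.game_id] = card
    return list(best.values())
```

Filtering before display, rather than showing every market, is what keeps the screen to one glanceable card per game.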

Chat for depth, not for everything. A LangGraph-powered agent lets power users go deeper on specific matchups, prop markets, parlay construction with correlation risk warnings, and odds comparison across more than twenty sportsbooks. Most users just need the cards. The conversation is there when you want it.
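Two of the agent's tools can be sketched in a few lines: flagging parlay legs that may be correlated, and picking the best price across books. This is a minimal sketch under stated assumptions: the "same game means possibly correlated" rule, the `Leg` type, and the book names in the usage are hypothetical, and the real tools presumably use richer signals.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Leg:
    game_id: str
    market: str     # e.g. "spread", "total", "player_points"
    selection: str


def correlation_warnings(legs: list[Leg]) -> list[str]:
    """Flag every pair of legs drawn from the same game.

    Standard parlay pricing multiplies the legs' decimal odds, which assumes
    independent outcomes; legs from one game often are not independent.
    """
    warnings = []
    for a, b in combinations(legs, 2):
        if a.game_id == b.game_id:
            warnings.append(
                f"{a.selection} ({a.market}) and {b.selection} ({b.market}) "
                f"are both in game {a.game_id}; outcomes may be correlated"
            )
    return warnings


def best_price(quotes: dict[str, int]) -> tuple[str, int]:
    """Return the (book, American odds) pair with the best payout.

    Payout rises with the American odds number (-105 beats -110, +150 beats
    +120, and a plus price never pays less than a minus price), so max() works.
    """
    return max(quotes.items(), key=lambda item: item[1])
```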

Minimal by conviction. Dark, calm, structured. The product profits when users win, and the visual language reflects that alignment of incentives. Nothing about the interface encourages impulsive behavior.

I designed and engineered the entire platform. AI-assisted development was a force multiplier, but every architectural decision, every data model, and every deployment is mine.

The system spans a web app with card-based picks, a bet tracking workbench, and a conversational UI built on Next.js 15 and React 19. There are native iOS and Android apps with GraphQL codegen and in-app purchases. The conversational AI agent runs multi-step reasoning workflows through LangGraph with live odds tools, a parlay builder, and correlation risk detection. The prediction engine handles factor discovery, combination testing, weight optimization, and temporal validation using XGBoost and LightGBM. A trading runtime executes live against Kalshi with circuit breakers and Redis state management. The data pipeline ingests near-real-time data at five-minute intervals from ESPN, SportsGameOdds, and BallDontLie through FastAPI, Inngest, dbt, and Dagster.
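The circuit breakers in the trading runtime are the piece most worth illustrating, since they decide when automated execution stops. Below is a minimal sketch of the general pattern, assuming two trip conditions (repeated order failures and a daily loss limit); the thresholds are hypothetical, no Kalshi API is called, and state is held in memory here rather than in Redis so the example is self-contained.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class CircuitBreaker:
    """Blocks new orders after repeated failures or too large a daily loss.

    Hypothetical sketch: state lives in memory here, not in Redis.
    """
    max_failures: int = 3          # consecutive order errors before tripping
    max_daily_loss: float = 100.0  # hypothetical loss limit, in dollars
    cooldown_s: float = 300.0      # how long the breaker stays open after tripping
    failures: int = 0
    daily_pnl: float = 0.0
    opened_at: Optional[float] = None

    def allow_order(self, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        if self.daily_pnl <= -self.max_daily_loss:
            return False  # loss limit stays tripped until reset_day()
        if self.opened_at is not None:
            if now - self.opened_at < self.cooldown_s:
                return False  # still cooling down after repeated failures
            self.opened_at = None  # cooldown elapsed: close the breaker
            self.failures = 0
        return True

    def record_failure(self, now: Optional[float] = None) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = time.monotonic() if now is None else now

    def record_fill(self, pnl: float) -> None:
        self.failures = 0  # a successful order clears the failure streak
        self.daily_pnl += pnl

    def reset_day(self) -> None:
        self.daily_pnl = 0.0
```

The loss limit deliberately does not reset on a timer: once a day's drawdown is hit, execution stays off until the next trading day.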

That's three programming languages, five data sources, and seven dbt serving marts powering every screen in the app. The daily picks workflow runs a multi-stage pipeline scheduled twice daily, timed to each sport's rhythm.

The reason to describe the scope isn't to list technologies. It's to explain a design decision: when one person holds the entire system, from the mobile UI down to the data pipeline, every layer can be shaped by the same set of priorities. The prediction engine's confidence scores are formatted the way they are because the same person who built the model also designed the card that displays them.

Most AI picks products never publish their numbers, and the reason is straightforward: the numbers aren't good. In sports betting, the house edge on standard -110 lines means you need roughly 52.4% accuracy just to break even. Every percentage point above that is real, measurable profit.
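The 52.4% figure follows directly from the odds: at -110 you risk 110 to win 100, so you break even when p × 100 = (1 − p) × 110, i.e. p = 110 / 210. The helpers below (names are illustrative) compute the break-even rate and the expected ROI for a given win rate, assuming flat stakes and no pushes.

```python
def breakeven_win_rate(american_odds: int) -> float:
    """Win rate needed to break even at the given American odds (pushes ignored)."""
    if american_odds < 0:
        return -american_odds / (-american_odds + 100)
    return 100 / (american_odds + 100)


def profit_per_unit(american_odds: int) -> float:
    """Profit on a winning one-unit stake; the stake itself is returned separately."""
    if american_odds < 0:
        return 100 / -american_odds
    return american_odds / 100


def expected_roi(win_rate: float, american_odds: int = -110) -> float:
    """Expected profit per unit staked with flat stakes: wins pay out, losses cost the stake."""
    return win_rate * profit_per_unit(american_odds) - (1 - win_rate)


print(f"{breakeven_win_rate(-110):.1%}")  # 52.4%
print(f"{expected_roi(0.55):+.1%}")       # +5.0%
```

At -110, each extra point of win rate adds about 1.9 points of ROI (the slope of `expected_roi` is 1 + 100/110), which is why small accuracy gains above break-even translate into measurable profit.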

I built the prediction engine to be judged by the only metric that matters: return on investment after the house takes its cut.

The system uses a factor discovery framework with strict architectural separation. There's an immutable evaluation harness that handles API integration and scoring. It never gets modified by the experimental process. The experiment configuration is what gets iterated, with git as the rollback mechanism. And there's a results ledger that survives resets and provides a complete audit trail.
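The results ledger is the part of this separation that makes the audit trail durable. A minimal sketch, assuming an append-only JSON Lines file kept out of the git-tracked experiment config (for example, gitignored) so that rolling the config back never rewrites it; the field names, including `git_commit`, are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass(frozen=True)
class ExperimentResult:
    config_hash: str  # fingerprint of the experiment configuration that was run
    git_commit: str   # hypothetical: commit of that config, for git-based rollback
    roi: float        # score produced by the unchanging evaluation harness
    n_bets: int


def config_hash(config: dict) -> str:
    """Stable fingerprint: identical configs hash the same regardless of key order."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


class ResultsLedger:
    """Append-only JSON Lines ledger.

    Assumed to live outside the git-tracked config, so rolling an experiment
    back with git leaves every recorded result in place.
    """

    def __init__(self, path: Path) -> None:
        self.path = path

    def append(self, result: ExperimentResult) -> None:
        # Append mode only: existing entries are never rewritten.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(asdict(result)) + "\n")

    def history(self) -> list[ExperimentResult]:
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            return [ExperimentResult(**json.loads(line)) for line in f if line.strip()]
```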

The discipline layer is temporal validation. The system uses three evaluation windows with boundaries placed in each of the four major sports' offseasons, so every window holds complete seasons. This prevents the models from learning patterns that only exist within a single season's data. All cross-validation uses expanding windows rather than shuffled folds, because shuffled validation on time-series data creates the illusion of accuracy by letting the model see the future. Feature selection is explicitly designed to avoid leakage.
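Expanding-window validation is easy to get subtly wrong, so here is a minimal sketch of the idea in plain Python. The function name is illustrative; scikit-learn's `TimeSeriesSplit` implements a similar scheme by row count, whereas this version splits on explicit date boundaries, which is what lets boundaries sit in an offseason.

```python
from datetime import date
from typing import Iterator


def expanding_window_splits(
    game_dates: list[date], boundaries: list[date]
) -> Iterator[tuple[list[int], list[int]]]:
    """Yield (train_indices, test_indices) for each evaluation window.

    Window k tests on games from boundaries[k] up to boundaries[k + 1]
    (or to the end of the data) and trains on every game before boundaries[k].
    The training set only grows, and every training game precedes every
    test game, unlike shuffled k-fold.
    """
    ordered = sorted(boundaries)
    for k, start in enumerate(ordered):
        end = ordered[k + 1] if k + 1 < len(ordered) else None
        train = [i for i, d in enumerate(game_dates) if d < start]
        test = [i for i, d in enumerate(game_dates)
                if d >= start and (end is None or d < end)]
        if train and test:
            yield train, test
```

For a single sport, three hypothetical boundaries such as `date(2022, 8, 1)`, `date(2023, 8, 1)`, and `date(2024, 8, 1)` fall in the NBA offseason and would yield three windows, each training only on earlier seasons.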

The system progresses through structured phases: single-factor profiling, combination testing, weight optimization, cross-sport exploration, and then graduation to local ML with XGBoost and LightGBM when the simpler approaches plateau.
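One way to make "graduate when the simpler approaches plateau" mechanical is a plateau check over the ROI history of each phase. This is a hypothetical sketch: the phase names mirror the list above, but the window size, the 0.5-point minimum gain, and the rule itself are assumptions, not the system's actual criteria.

```python
PHASES = ["single_factor", "combination", "weight_optimization", "cross_sport", "local_ml"]


def has_plateaued(roi_history: list[float], window: int = 3, min_gain: float = 0.005) -> bool:
    """True when the best ROI in the last `window` rounds beats the best
    earlier ROI by less than `min_gain` (hypothetical thresholds)."""
    if len(roi_history) <= window:
        return False  # not enough rounds to judge yet
    best_before = max(roi_history[:-window])
    best_recent = max(roi_history[-window:])
    return best_recent - best_before < min_gain


def next_phase(current: str, roi_history: list[float]) -> str:
    """Advance to the next phase only when the current one has stopped improving."""
    idx = PHASES.index(current)
    if idx + 1 < len(PHASES) and has_plateaued(roi_history):
        return PHASES[idx + 1]
    return current
```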

Model Performance: NBA Player Props

| Prop Type | ROI | Sample Size |
|---|---|---|
| Blocks | +32.19% | 4,663 bets |
| Threes | +12.83% | 4,240 bets |
| Rebounds | +9.23% | 4,834 bets |
| Steals | +8.54% | 4,680 bets |
| Assists | +7.52% | 4,295 bets |
| Points | +4.70% | 4,961 bets |

That's nearly 28,000 backtested bets (27,673 in total) with positive ROI on every prop type. These aren't accuracy percentages. They're profit margins, measured after the house edge.
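The totals in the NBA player-props table can be checked directly. The sketch below copies its rows and computes the bet count and a pooled, bet-weighted ROI; the pooling assumes every bet risked the same one-unit stake, which the table does not state.

```python
# Rows copied from the NBA player-props table: (prop type, ROI %, bets).
PROP_RESULTS = [
    ("Blocks", 32.19, 4663),
    ("Threes", 12.83, 4240),
    ("Rebounds", 9.23, 4834),
    ("Steals", 8.54, 4680),
    ("Assists", 7.52, 4295),
    ("Points", 4.70, 4961),
]


def total_bets(rows: list[tuple[str, float, int]]) -> int:
    return sum(n for _, _, n in rows)


def pooled_roi(rows: list[tuple[str, float, int]]) -> float:
    """Bet-weighted ROI in percent, assuming flat one-unit stakes on every bet."""
    return sum(roi * n for _, roi, n in rows) / total_bets(rows)


print(total_bets(PROP_RESULTS))  # 27673
```

Weighting by bet count, rather than averaging the six percentages, keeps a smaller sample from counting as much as a larger one.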

Product Traction

500 subscribers across Insider and Pro tiers

30% daily active rate, meaning nearly a third of subscribers open the app every day

Zero marketing spend. Every subscriber came through word-of-mouth from people who win.

Users text me winning bet slips. There isn't a better form of product validation.

The hardest part of building The Pick wasn't any single technical domain. It was the accumulation. Each new domain (data science, ML pipelines, autonomous trading systems, native mobile development on two platforms, agent orchestration, dbt modeling, and Dagster) came with its own failure modes and its own moment where I considered moving on to something easier.

I stopped thinking of myself as a designer who codes the day I watched my Kalshi balance increase from predictions generated by models I'd built. The path from design to data science to autonomous execution was one I walked myself, and I tried a lot of things that failed along the way. Each failure pointed somewhere useful.

The Pick is what happens when someone who thinks in user experience and system architecture and data science keeps going when the domains get uncomfortable.

Eleven services, nearly 28,000 backtested bets, and every subscriber came from word-of-mouth.